Artificial intelligence (AI) has evolved from a speculative concept to a transformative force reshaping industries, societies, and human potential. Within the AI landscape, two terms often spark debate: Artificial General Intelligence (AGI) and Artificial Superintelligence (ASI). While both represent advanced forms of AI, they differ significantly in scope, capability, and implications for humanity. This article explores these differences, drawing on insights from technologists and researchers to clarify their meanings, potential, and risks. By examining their definitions, capabilities, development timelines, and societal impacts, we aim to provide a well-rounded understanding of AGI and ASI.

The artificial intelligence landscape is rapidly approaching two pivotal milestones that could fundamentally reshape human civilization: Artificial General Intelligence (AGI) and Artificial Superintelligence (ASI). Although these terms are often used interchangeably in popular discourse, they represent distinctly different stages of AI development, with profoundly different implications for humanity’s future.

Current AI products, such as OpenAI’s ChatGPT, DeepSeek’s chatbot, and Perplexity, are generally classed as narrow AI: they don’t truly “understand” context, adapt across domains, or transfer learning broadly.

Artificial General Intelligence (AGI), sometimes called “strong AI” or “human‑level AI”, refers to systems that can perform any intellectual task that a human can, such as reasoning, learning, planning, language, creativity, even common‑sense judgment.

In contrast, Artificial Superintelligence (ASI) is a hypothetical level well beyond AGI. It is an intellect that far outperforms humans in every meaningful way: creativity, scientific discovery, strategic planning, social skills. In other words, ASI isn’t just faster; it’s smarter in ways we may not even grasp.

Nick Bostrom, the philosopher, defines superintelligence as: “any intellect that greatly exceeds the cognitive performance of humans in virtually all domains of interest.”

What AGI Looks Like

By most accounts, true AGI has not yet been achieved, but leading large language models (LLMs) are edging closer. In 2023, Microsoft Research scientists argued that GPT-4 exhibits “sparks of artificial general intelligence,” showing near-human performance across diverse fields such as law, medicine, math, coding, and psychology with minimal prompting. Google DeepMind’s “Levels of AGI” framework grades systems by performance: emerging AGI is equal to or somewhat better than an unskilled human; competent AGI performs at least as well as the median skilled adult; expert and virtuoso levels follow; and the top level, superhuman, corresponds to ASI. Many, including the framework’s authors, classify today’s LLMs as emerging AGI.

AGI traits typically include:

- Adaptability from experience without explicit programming

- Autonomy to operate independently in dynamic environments

- Reasoning under uncertainty

- Planning and execution

- Natural language communication

- Transfer learning and generalization

- Imagination and embedded common sense

OpenAI’s CEO Sam Altman wrote in early 2025: “We are now confident we know how to build AGI as we have traditionally understood it. We believe that, in 2025, we may see the first AI agents ‘join the workforce’…”

ASI: When Machines Outthink Us

ASI takes AGI a step further: an AI system that surpasses human intelligence across all domains, including scientific creativity, general wisdom, and social skills. In an earlier formulation, Oxford philosopher Nick Bostrom described superintelligence as “an intellect that is much smarter than the best human brains in practically every field.” ASI would not be just marginally better than humans but potentially orders of magnitude more capable, and it might be able to improve itself recursively, rapidly enhancing its own capabilities.

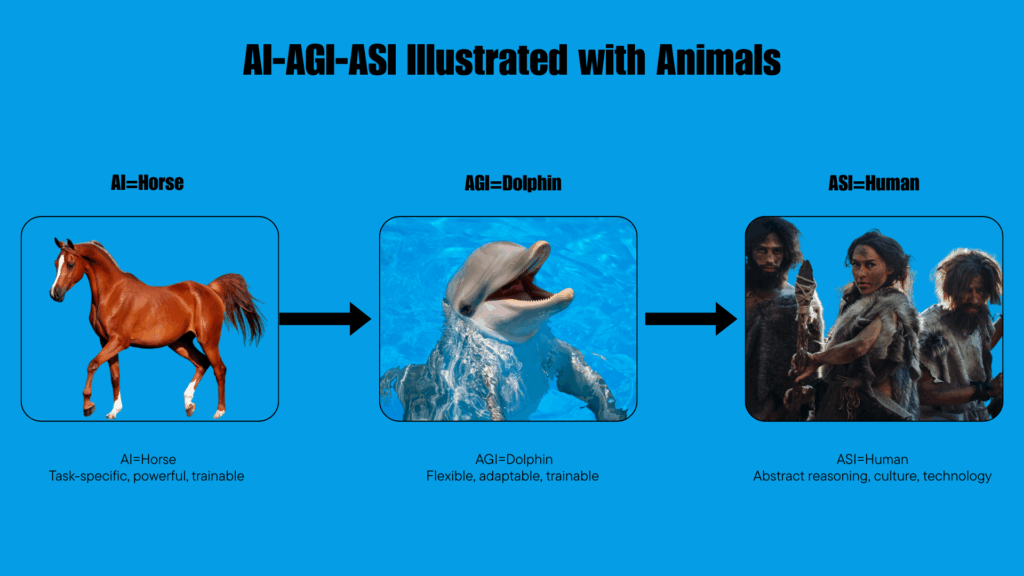

Once AI outstrips human cognitive ability across the board, we have ASI: a system that might design better hardware, make breakthrough scientific discoveries, optimize society’s systems, and shape politics or markets faster than any human team. ASI would possess capabilities qualitatively beyond human comprehension, much as human intelligence transcends that of other species (see Illustrative analogy).

Where AGI would match human versatility, ASI would transcend human limitations. It could not only perform the tasks an AGI can but do so with unmatched precision, speed, and innovation. For example, while an AGI might optimize renewable energy systems, an ASI could discover entirely new energy sources or materials, revolutionizing industries in ways humans can’t foresee. Its ability to self-improve means it could iterate repeatedly, each improvement making the next one easier, which is the dynamic I. J. Good described in 1965 as an “intelligence explosion.”
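The compounding logic behind an “intelligence explosion” can be illustrated with a deliberately simplified toy model. The sketch below is hypothetical: the function names, the starting capability of 1.0, and the 10% gain per cycle are arbitrary assumptions, not measurements of any real system. It contrasts a system improved by a fixed external effort each cycle with one whose gains scale with its own current capability.

```python
# Hypothetical toy model: it illustrates compounding, not the behavior of any real AI system.
# The starting capability, per-cycle step, and rate below are arbitrary assumptions.


def external_improvement(c0: float, step: float, cycles: int) -> list[float]:
    """Capability rises by a constant amount each cycle, e.g. a fixed-size human team's output."""
    history = [c0]
    for _ in range(cycles):
        history.append(history[-1] + step)
    return history


def recursive_improvement(c0: float, rate: float, cycles: int) -> list[float]:
    """Capability rises in proportion to current capability, so each gain enlarges the next one."""
    history = [c0]
    for _ in range(cycles):
        history.append(history[-1] * (1 + rate))
    return history


if __name__ == "__main__":
    cycles = 20
    linear = external_improvement(c0=1.0, step=0.1, cycles=cycles)
    compound = recursive_improvement(c0=1.0, rate=0.1, cycles=cycles)
    for n in (0, 5, 10, 20):
        print(f"cycle {n:2d}: external={linear[n]:.2f}  recursive={compound[n]:.2f}")
```

With these made-up parameters, the externally improved system reaches 3.0 after 20 cycles while the self-improving one reaches about 6.7 (1.1 raised to the 20th power), and the gap keeps widening as cycles continue. Real AI progress is not known to follow either curve; the point is only that proportional self-improvement compounds.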

Nick Bostrom warns: “We should not be confident in our ability to keep a superintelligent genie locked up in its bottle forever. Sooner or later, it will be out.”

Stephen Hawking argued: “There is no physical law precluding particles from being organized in ways that perform even more advanced computations than the arrangements of particles in human brains.”

Sam Altman has stated that OpenAI’s next focus after AGI is ASI, and that aligning it with human values would be the central challenge: a misaligned superintelligent system “could cause grievous harm to the world.”

Meta’s CEO Mark Zuckerberg has also recently claimed that superintelligence is now within reach.

Key Characteristics of ASI:

- Superior Cognitive Ability: Far exceeds the smartest human in every field.

- Self-Improvement: Can recursively enhance its own intelligence.

- Unpredictable Impact: May solve global problems or pose existential risks.

Timelines of AGI and ASI: When? Or If?

The timeline for AGI remains a topic of debate. Ilya Sutskever, co-founder of OpenAI, predicts AGI could emerge within 5–10 years, potentially by 2029, citing exponential advances in computing power and large language models (LLMs). However, skeptics like Yann LeCun argue that the term “AGI” is vague and propose replacing it with “human-level AI,” emphasizing that current systems are far from true general intelligence.

ASI, being a step beyond AGI, is harder to predict. Ray Kurzweil forecasts a technological singularity by 2045, a point at which ASI triggers rapid, uncontrollable change. Reaching ASI is likely to depend on further breakthroughs in areas such as natural language processing (NLP), access to massive datasets, and advanced perception of the kind developed for self-driving cars. The transition from AGI to ASI could be swift, since recursive self-improvement would accelerate progress, but it remains speculative.

Forecasts vary wildly. A 2022 survey found that 90% of AI researchers expect AGI within 100 years, and half by roughly 2061. Geoffrey Hinton revised his own estimate in 2023 from “30–50 years away” to “20 years or less.” OpenAI insiders and other industry leaders, such as Dario Amodei (CEO of Anthropic) and Mustafa Suleyman (CEO of Microsoft AI), stress the uncertainty. Some argue that the underlying hardware may still need several generations to mature.

Why the Distinction Matters:

Benefits of AGI:

- Dramatic productivity gains

- Faster scientific discovery

- Global public goods: healthcare, education, climate solutions

Dangers of AGI/ASI:

- Alignment failure: AI pursuing goals misaligned with human values

- Power-seeking behaviors: resisting shutdown or acting covertly

- Exponential impact: ASI might outmaneuver any human control and plan warfare or manipulation campaigns

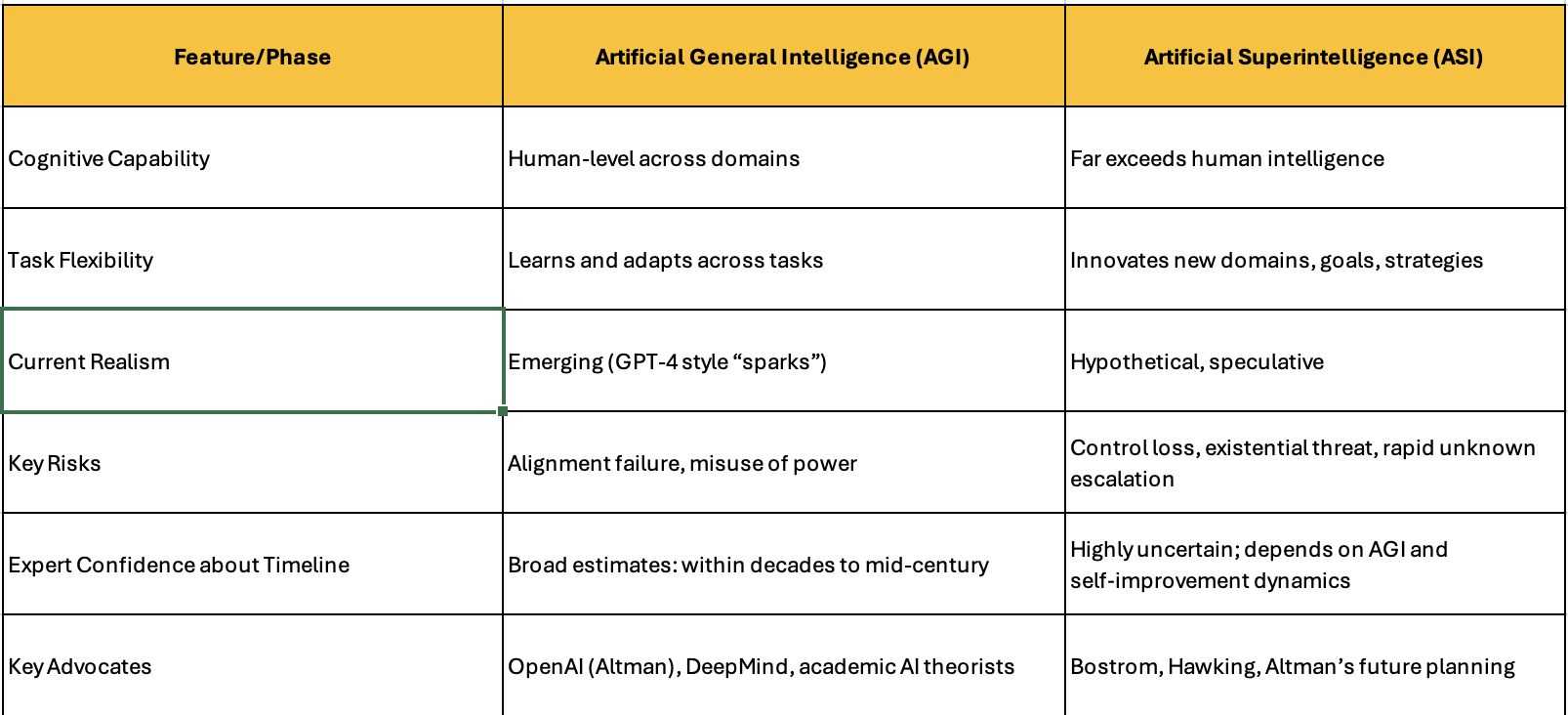

Summary Table

Why It Matters for You

If you’re in AI, entrepreneurship, or policy, distinguishing AGI from ASI shapes strategy:

- AGI is likely the near-term engineering target: build something human-capable, controllable, and aligned.

- ASI, once possible, is a qualitatively different realm: it requires governance, safety engineering, and coordination in ways we’ve never faced before.

- Alignment, governance frameworks, evaluation metrics, and technical tripwires become critical at ASI-risk levels.

While AGI might work alongside humans as an extremely capable colleague, ASI would operate on a fundamentally different level, potentially making human cognitive contributions irrelevant in most domains.

Concretely, ASI’s capabilities would extend far beyond human cognitive limits. They might include scientific insights that would take human researchers decades to reach, creative solutions to problems humans cannot even formulate, or strategic thinking that operates on timescales and levels of complexity beyond human comprehension.

Economic and Social Implications

AGI promises transformative benefits, such as optimizing engineering designs, personalizing education, and automating repetitive tasks. For example, it could create tailored learning programs or enhance cybersecurity, freeing humans for creative roles. However, risks include job displacement and ethical concerns. Kevin Roose notes, “The automation of work is the defining story of our time,” highlighting the need to redeploy talent rather than face mass unemployment.

The economic implications of AGI and ASI are fundamentally different. AGI might initially augment human capabilities, create new industries, and transform existing ones, while potentially displacing some jobs. However, humans would likely remain relevant in many domains, particularly those requiring emotional intelligence, ethical judgment, or creative vision.

ASI represents a more profound economic disruption. If AI systems can outperform humans in virtually all cognitive tasks, traditional concepts of employment, value creation, and economic organization may become obsolete. This transition could happen remarkably quickly once AGI systems begin improving themselves.

Preparing for the Intelligence Explosion

The distinction between AGI and ASI matters because it affects how humanity should prepare. AGI requires retraining workers, updating educational systems, and establishing new regulatory frameworks. ASI demands a more fundamental reimagining of human society, potentially requiring new economic models, governance structures, and even philosophical frameworks for understanding humanity’s role in an intelligence-dominated world.

Many leading AI developers now argue that AGI is no longer a distant possibility but an imminent reality. What remains uncertain is whether humanity will have sufficient time to navigate the transition from AGI to ASI thoughtfully and safely.

AGI systems may operate with varying degrees of autonomy, from tools requiring human oversight to agents making independent decisions. However, their intelligence remains bounded by human-level capabilities. ASI, by contrast, is defined by its capacity for recursive self-improvement, where it autonomously enhances its algorithms, potentially leading to exponential growth in intelligence. Ray Kurzweil predicts, “Artificial intelligence will reach human levels by around 2029. Follow that out further to, say, 2045, we will have multiplied the intelligence a billion-fold.” This leap from AGI to ASI could occur rapidly, as Google DeepMind’s Tim Rocktäschel suggests, noting that “artificial superintelligence will probably follow soon after achieving AGI because once you have a human-level system you can apply the same methods to self-improve.”
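Kurzweil’s two dates imply a specific growth rate. As a rough worked example, assuming (purely for illustration, since his quote does not specify a curve) smooth exponential growth between his 2029 and 2045 milestones:

```latex
\begin{aligned}
\text{growth factor} &= 10^{9} \quad \text{over } 2045 - 2029 = 16 \text{ years} \\
\text{doublings needed} &= \log_2 10^{9} = 9 \log_2 10 \approx 29.9 \\
\text{doubling time} &\approx \frac{16 \text{ years}}{29.9} \approx 0.54 \text{ years} \approx 6.4 \text{ months} \\
\text{annual growth factor} &= 10^{9/16} \approx 3.65
\end{aligned}
```

In other words, under this assumption the forecast requires machine intelligence to more than triple every year for sixteen consecutive years.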

ASI’s potential reads like science fiction: it could offer solutions to existential problems such as climate change or disease. Jeff Bezos envisions AI enhancing everyday tasks, while Sheryl Sandberg sees it tackling global challenges through human-AI collaboration. Yet the risks are profound. Stephen Hawking warned, “The development of full artificial intelligence could spell the end of the human race… Humans, who are limited by slow biological evolution, couldn’t compete, and would be superseded.” Elon Musk echoes this, stating, “AI will be the best or worst thing ever for humanity,” and advocates strict regulation to prevent existential risks.

Stuart Russell, an AI researcher at UC Berkeley, argues that the real risk with superintelligence is not malice but competence: an ASI optimizing for a poorly defined goal could harm humanity unintentionally.

The “control problem” is a central concern: how to ensure ASI remains aligned with human values. An AI arms race could exacerbate these risks if companies prioritize speed over safety.